Data Strategy for Large Organizations

Before the technical solutions, tackle the organizational alignment

Author’s Note: This is the final article in a three-part series about data strategy throughout an organization’s lifecycle. The first article focused on the challenges facing early-stage startups. The second turned its attention to medium-stage startups and the unique challenges faced there. Finally, this article addresses large organizations.

In early-stage and growing startups, we in the data world tend to traffic in technical solutions to data problems, an instinct that generally serves us well. In the world of enterprise data, we often continue to focus on technical solutions, missing the fact that the problems at this scale are fundamentally organizational. As organizations grow beyond 150 people with dedicated technical and data teams, the challenges shift from technical capabilities to organizational dynamics. This article explores why traditional approaches to data management fall short and proposes a fundamental reset in how we think about data strategy.

Note: this article assumes you’ve already implemented the recommendations in the first two articles. If you haven’t, go back and implement those first!

A Tale of Good Intentions Gone Wrong

Alex, the Chief Data Officer at a Fortune 500 retailer, stared at his screen in frustration. Another executive had emailed about broken dashboards—the third this week. The sales analytics his team maintained were showing inconsistent numbers, and the customer engagement metrics hadn't updated in three days.

His team had already diagnosed the issues: upstream data changes in the mobile app's event tracking had broken their pipelines, while new fields added to the CRM system had corrupted several critical reports. The root causes were changes made by other departments, but to the executive team, these were "data problems" that fell squarely on his shoulders.

In the monthly executive committee meeting, Alex presented his vision for solving these persistent issues. He spoke passionately about implementing data mesh architecture, establishing data governance councils, and creating mandatory data contracts between teams. The room grew quiet as he outlined the comprehensive technical solutions he believed would fix their data problems.

The CTO spoke first: "Alex, I appreciate the thorough proposal, but how can we trust these new initiatives when the basic dashboards don't work? Let's fix what's broken before adding more complexity."

The CFO nodded in agreement: "My team needs reliable numbers now. These solutions sound like they'll take months or years."

What Alex and his critics both missed was that they were treating symptoms rather than the disease. The broken dashboards and failed pipelines weren't primarily technical failures—they were the visible symptoms of misaligned organizational incentives.

The mobile app team's success was measured by app store ratings and feature delivery; data compatibility wasn't on their scorecard. The CRM team was racing to implement new sales features, and maintaining stable data schemas was, at best, an afterthought. Alex's data team was caught in the middle, trying to build reliability on top of systems whose owners had no real incentive to provide it.

Six months later, Alex left the company, frustrated and exhausted. His replacement, Sarah, took a different approach, which we’ll see at the end of the article.

The Core Problem: Misaligned Incentives

The elephant in the room for enterprise data strategy is misaligned incentives. While data teams push solutions like data governance, data contracts, and data mesh to manage growing complexity, these are ultimately band-aids that fail if the incentives of all parties are not aligned towards data quality.

Consider a typical scenario: the product team builds a customer-facing application. Their primary mission is delivering features and maintaining system reliability. They also generate valuable data about customer behavior, which the Analytics team wants to use for insights. The product team views this data as a byproduct, while Analytics sees it as mission-critical.

This misalignment manifests in several ways:

Data producers have little incentive to maintain high data quality

Schema changes are made without considering downstream impacts

Documentation and metadata are treated as afterthoughts

Data reliability is secondary to primary business functions

The Solution: Fix Incentives First

Before implementing any technical solutions, organizations need to align incentives between data producers and consumers. Here are two approaches:

Approach 1: Direct Payment Model

Establish a direct transfer payment system where data consumers pay producers for their data. This approach:

Quantifies the true value of internal data

Creates explicit accountability for data quality

Treats internal data like any other resource with associated costs

Encourages producers to invest in data quality and reliability

However, this model can face resistance due to:

Administrative complexity

Concerns about internal market fairness

Additional accounting overhead

Political challenges in large organizations

For example: The Marketing Analytics team needs reliable customer engagement data from the Mobile App team. Instead of just requesting the data, they allocate $200,000 of their annual budget to "purchase" this data feed. This budget covers one dedicated data engineer on the Mobile App team who ensures the data meets agreed-upon quality standards (99.9% uptime, < 1% error rate, schema changes announced 30 days in advance). If quality drops, Marketing can withhold payment and "shop elsewhere" – perhaps by purchasing similar data from the Website team instead. The Mobile App team, seeing the direct revenue from their data, now prioritizes data quality alongside app features. They even start investing in better data tooling because they can clearly justify the ROI based on their "data revenue stream."
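To make those terms concrete, here is a minimal Python sketch of how Marketing might check the feed against the agreed standards each month. The class and function names are hypothetical, and so is the payment policy (one-twelfth of the annual budget, withheld in full on any breach); a real agreement would likely be more graduated.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class FeedSla:
    """Quality terms from the example above; field names are illustrative."""
    min_uptime: float = 0.999      # 99.9% uptime
    max_error_rate: float = 0.01   # error rate must stay under 1%
    min_notice_days: int = 30      # schema changes announced 30 days ahead


@dataclass(frozen=True)
class MonthlyReport:
    """Hypothetical measurements the producing team reports each month."""
    uptime: float
    error_rate: float
    shortest_schema_notice_days: Optional[int]  # None if no schema changes


def sla_breaches(sla: FeedSla, report: MonthlyReport) -> List[str]:
    """Return the names of the SLA terms that were missed this month."""
    breaches = []
    if report.uptime < sla.min_uptime:
        breaches.append("uptime")
    if report.error_rate >= sla.max_error_rate:
        breaches.append("error_rate")
    notice = report.shortest_schema_notice_days
    if notice is not None and notice < sla.min_notice_days:
        breaches.append("schema_notice")
    return breaches


def monthly_payment(annual_budget: float, breaches: List[str]) -> float:
    """Assumed policy: pay one-twelfth of the budget only if nothing was breached."""
    return annual_budget / 12 if not breaches else 0.0


sla = FeedSla()
good_month = MonthlyReport(uptime=0.9995, error_rate=0.004,
                           shortest_schema_notice_days=None)
bad_month = MonthlyReport(uptime=0.9950, error_rate=0.004,
                          shortest_schema_notice_days=10)

print(sla_breaches(sla, good_month))  # []
print(sla_breaches(sla, bad_month))   # ['uptime', 'schema_notice']
print(monthly_payment(200_000, sla_breaches(sla, bad_month)))  # 0.0
```

The point of the sketch is less the arithmetic than the fact that the quality terms are written down, measured, and tied to money that the producing team can see.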

Approach 2: OKR Integration

Alternatively, integrate data quality into the organizational OKR (Objectives and Key Results) framework:

Set specific objectives for data reliability and availability

Include data quality metrics in performance evaluations

Tie data-related goals to compensation

Make data quality a shared responsibility

This approach can be easier to implement while still creating the necessary alignment.
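As a rough illustration of what "integrating" data quality into OKRs can mean in practice, the sketch below computes a weighted score across key results. The function, the weights, and the attainment figures are all made up for the example; the only idea taken from the approach above is that data reliability gets a real weight next to feature delivery.

```python
from typing import Dict, Tuple


def okr_score(key_results: Dict[str, Tuple[float, float]]) -> float:
    """Weighted average of key-result attainment.

    key_results maps a name to (weight, attainment); attainment is
    clamped to the range [0, 1] before weighting.
    """
    total_weight = sum(weight for weight, _ in key_results.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted = sum(weight * min(max(attainment, 0.0), 1.0)
                   for weight, attainment in key_results.values())
    return weighted / total_weight


# Hypothetical team: strong on features, weaker on data reliability.
features_only = {"feature_delivery": (1.0, 0.9)}
with_data_quality = {
    "feature_delivery": (0.5, 0.9),
    "data_reliability": (0.5, 0.6),
}

print(round(okr_score(features_only), 2))      # 0.9
print(round(okr_score(with_data_quality), 2))  # 0.75
```

Under the second weighting, a team can no longer score well on feature delivery alone, which is the alignment this approach is after.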

Building on Aligned Incentives

Once incentives are aligned, Data Team-led initiatives become much more effective. Here's the recommended implementation order:

Data Governance

Define clear ownership and responsibilities

Establish quality standards

Create feedback mechanisms

Data Contracts

Treat data like APIs

Define clear interfaces and expectations

Version changes properly

Document dependencies

Data Observability

Monitor data quality metrics

Track usage patterns

Alert on anomalies

Measure impact
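To show how "treat data like APIs" and "alert on anomalies" might look in code, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `DataContract` class, the rule that a breaking change requires a major version bump, and the null-rate threshold are not taken from any particular tool.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class DataContract:
    """A hypothetical contract: a versioned schema plus one quality threshold."""
    name: str
    version: Tuple[int, int]       # (major, minor)
    fields: Dict[str, type]        # required field name -> expected type
    max_null_rate: float = 0.01


def breaking_changes(old: DataContract, new: DataContract) -> List[str]:
    """List changes in `new` that would break consumers built against `old`."""
    problems = []
    for field_name, field_type in old.fields.items():
        if field_name not in new.fields:
            problems.append(f"removed field: {field_name}")
        elif new.fields[field_name] is not field_type:
            problems.append(f"retyped field: {field_name}")
    return problems


def version_is_valid(old: DataContract, new: DataContract) -> bool:
    """Assumed rule: breaking changes need a major bump; others any increase."""
    if breaking_changes(old, new):
        return new.version[0] > old.version[0]
    return new.version > old.version


def quality_alerts(contract: DataContract, rows: List[dict]) -> List[str]:
    """Observability check: flag fields whose null rate exceeds the threshold."""
    if not rows:
        return []
    alerts = []
    for field_name in contract.fields:
        nulls = sum(1 for row in rows if row.get(field_name) is None)
        rate = nulls / len(rows)
        if rate > contract.max_null_rate:
            alerts.append(f"{field_name}: null rate {rate:.0%} "
                          f"exceeds {contract.max_null_rate:.0%}")
    return alerts


v1 = DataContract("app_events", (1, 0),
                  {"user_id": str, "event": str, "ts": float})
# Drops `ts` but only bumps the minor version: a contract violation.
v2 = DataContract("app_events", (1, 1), {"user_id": str, "event": str})

print(breaking_changes(v1, v2))   # ['removed field: ts']
print(version_is_valid(v1, v2))   # False

batch = [
    {"user_id": "a", "event": "open", "ts": 1.0},
    {"user_id": None, "event": "tap", "ts": 2.0},
]
print(quality_alerts(v1, batch))  # ['user_id: null rate 50% exceeds 1%']
```

Checks like these are cheap to write. What the preceding sections argue is that they only stick when the producing team has a reason to care when one fails.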

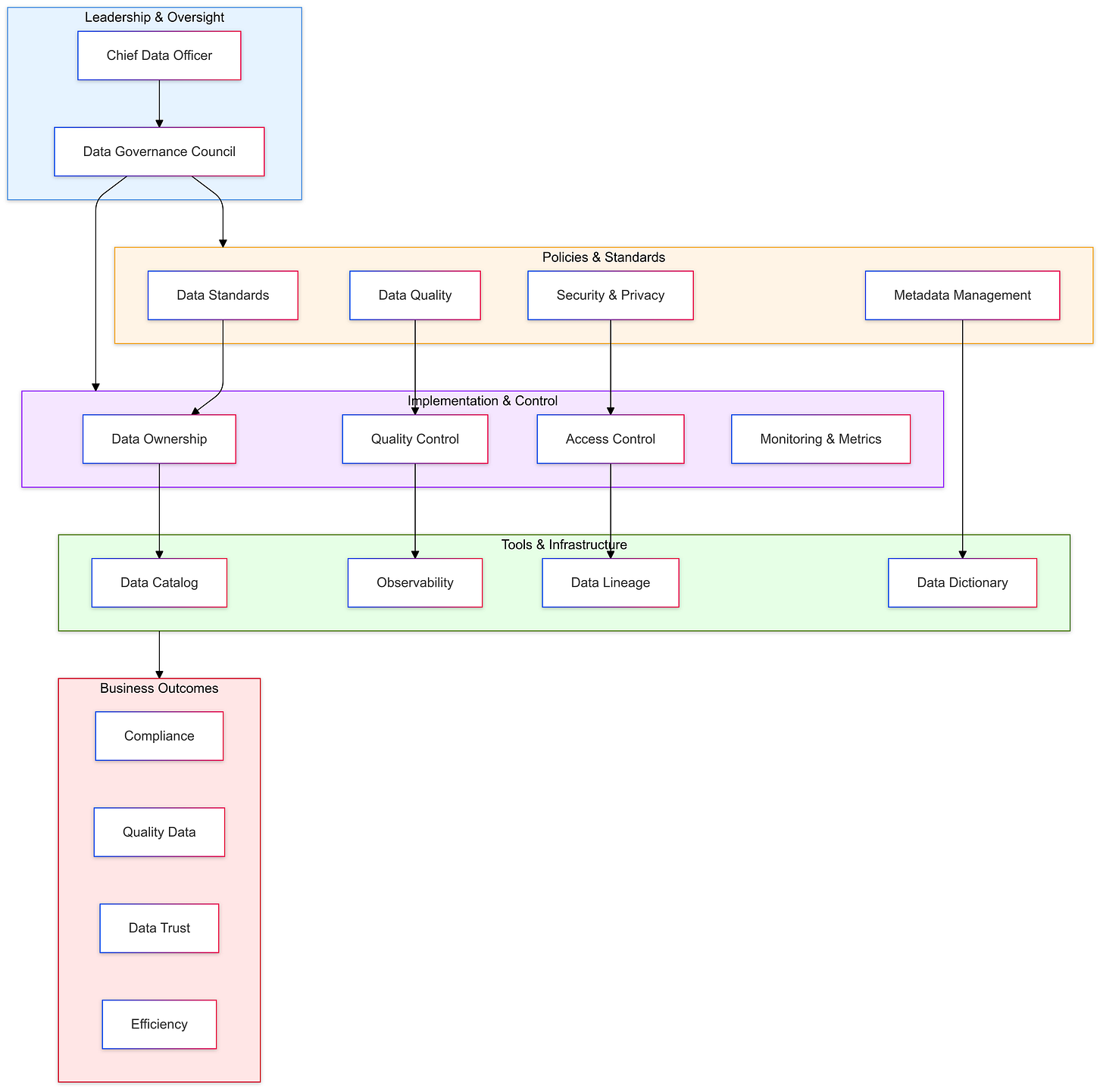

Below is an organizational structure and development diagram for implementing data governance - there are of course many approaches out there, but this diagram gives a simple overview of what is required.

Organizational Structure: Centralized Teams, Business-Driven Priorities

A successful data organization requires careful balance between centralization and business alignment. While data teams should be centrally managed to maintain consistent standards and career development paths, their work must be driven by business unit priorities.

Key principles:

Central data team manages hiring, training, and best practices

Dedicate data professionals to specific business units when possible

Business units define priorities and risk appetite

Avoid splitting data professionals across multiple units

Central team provides technical oversight while business drives roadmap

This structure provides several benefits:

Clear ownership and accountability

Deep business context for data professionals

Consistent technical standards

Focused attention on business priorities

Better career development paths

The Matrix Organization: The Least Bad Option

Let's acknowledge what we're proposing here: a matrix organization where data professionals have both a functional reporting line (to the central data team) and a "dotted line" to business units. If you just felt a shiver run down your spine, you're not alone.

Matrix organizations are often criticized, and for good reason. They create competing priorities, dual reporting structures, and endless alignment meetings. They're complex, political, and frequently frustrating. They're terrible, really—surpassed only by every other organizational structure we've tried.

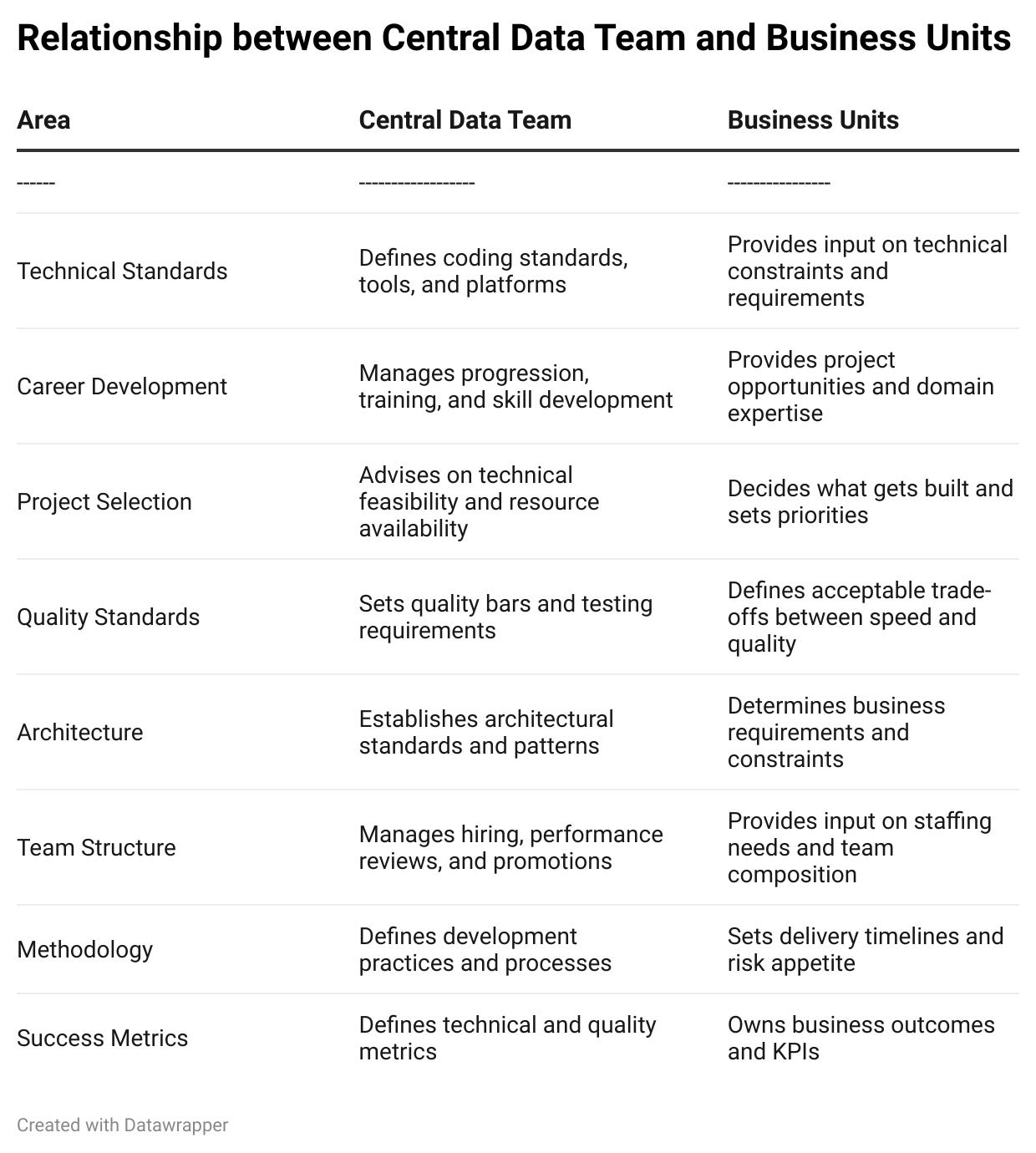

The reality is that data teams need both central oversight and business unit embeddedness. Here's how responsibilities break down:

Yes, you'll still have endless discussions about resource allocation. Yes, your data scientists will sometimes feel torn between functional excellence and business demands. And yes, you'll occasionally wonder if there's a better way.

But take heart: those endless alignment discussions are actually a feature, not a bug. They force explicit trade-offs and keep the organization honest about priorities. Sometimes the best organizational structure isn't the one with the fewest problems—it's the one with the most manageable problems.

Conclusion

The key to successful data strategy in large organizations isn't choosing the right technical solution—it's fixing the incentives first. Whether through direct payment models or integrated OKRs, organizations must align the motivations of data producers with the needs of data consumers.

Only after establishing this foundation should organizations implement technical solutions like governance frameworks, data contracts, and observability tools. This approach leads to sustainable, scalable data practices that deliver real business value.

Remember: Technical solutions can help enforce good practices, but they can't create them. Fix the incentives, and good practices will follow naturally.

Epilogue: The Path Forward

Alex’s replacement, Sarah, took a different approach. Instead of starting with technical solutions, she spent her first month understanding how different teams were measured and rewarded. Her first major initiative wasn't a technical overhaul—it was working with HR and Finance to change how data quality affected team performance metrics and bonuses.

Three years after Alex's departure, the same company's data landscape was unrecognizable. Sarah had taken her early insights about incentives and turned them into organizational reality.

One of her early moves had seemed counterintuitive to many: she actually reduced the size of the central data team. But simultaneously, she worked with the CFO to establish a new budgeting model that treated data as an internal product. Teams that produced critical data others relied on received additional headcount and resources, paid for by the consuming teams' budgets.

The mobile app team, once notorious for breaking dashboards with unannounced changes to event tracking and user analytics, now had two dedicated data engineers. Their salaries were covered by a combination of funding from Marketing, Product Analytics, and Customer Success—the teams that most heavily relied on their user behavior data. More importantly, their year-end bonuses were tied directly to data reliability metrics and consumer satisfaction scores.

The CRM team's product managers now included data contract compliance in their OKRs, weighted as heavily as feature delivery. They had initially pushed back, arguing this would slow down development. But when they started receiving recognition (and compensation) for maintaining stable, well-documented data interfaces, the culture shifted. They began to take pride in their data quality metrics the same way they had always celebrated uptime statistics.

In a particularly satisfying moment, Sarah was presenting her quarterly review when the CTO—the same one who had doubted Alex's vision years ago—interrupted with a revelation: "You know what I just realized? We haven't had a major data incident in over six months. Whatever you did with those team incentives, it worked."

Sarah smiled, thinking of Alex. The technical solutions he had proposed (data mesh, governance, contracts) were all now in place and working smoothly. But they worked because they were built on a foundation of aligned incentives, not imposed as order on top of chaos. The tools, she had learned, don't create the culture; they just help formalize it.